... `.env` file or it will expose secrets that will allow others to control access to your various OpenAI and authentication provider accounts. It's recommended you use Vercel Environment Variables for this, but a ...

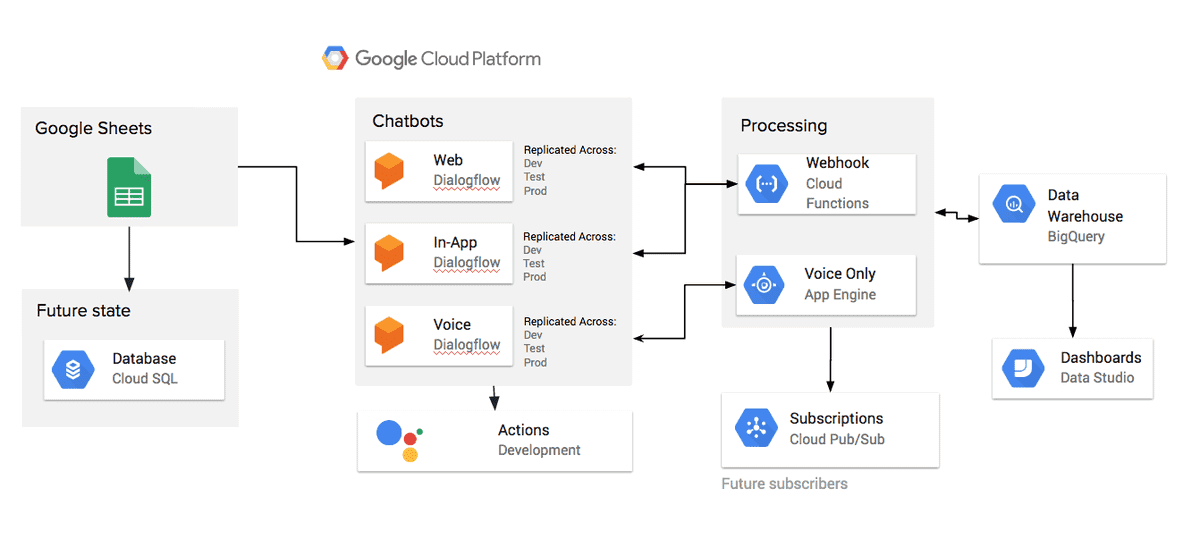

Features of the Next.js AI Chatbot template include:

- React Server Components (RSCs), Suspense, and Server Actions.
- Support for OpenAI (default), Anthropic, Cohere, Hugging Face, or custom AI chat models and/or LangChain.
- Radix UI for headless component primitives.
- Chat history, rate limiting, and session storage with Vercel KV.

This template ships with OpenAI gpt-3.5-turbo as the default. However, thanks to the Vercel AI SDK, you can switch LLM providers to Anthropic, Cohere, Hugging Face, or LangChain with just a few lines of code.

You can deploy your own version of the Next.js AI Chatbot to Vercel with one click.

To create a KV database instance, follow the steps outlined in the quick start guide provided by Vercel. This guide will assist you in creating and configuring your KV database instance on Vercel, enabling your application to interact with it. Remember to update your environment variables (`KV_URL`, `KV_REST_API_URL`, `KV_REST_API_TOKEN`, `KV_REST_API_READ_ONLY_TOKEN`) in the `.env` file with the appropriate credentials provided during the KV database setup. You will need to set the required environment variables before running the application.

Bag of words is a way of extracting features from text for use in machine learning algorithms. The training data for the chatbot is built with it as follows:

```python
import random

import numpy as np
from nltk.stem import WordNetLemmatizer

# Assumed: the lemmatizer is NLTK's WordNetLemmatizer.
lemmatizer = WordNetLemmatizer()

# `words` (vocabulary), `classes` (intent tags) and `documents`
# ((tokenized pattern, tag) pairs) come from the earlier preprocessing step.
training = []
output_empty = [0] * len(classes)

for doc in documents:
    # initializing bag of words
    bag = []
    # list of tokenized words for the pattern
    pattern_words = doc[0]
    # lemmatize each word - create base word, in attempt to represent related words
    # (assumed form of the lemmatizer call)
    pattern_words = [lemmatizer.lemmatize(word.lower()) for word in pattern_words]
    # create our bag of words array with 1, if word match found in current pattern
    for w in words:
        bag.append(1 if w in pattern_words else 0)

    # output is a '0' for each tag and '1' for current tag (for each pattern)
    output_row = list(output_empty)
    output_row[classes.index(doc[1])] = 1
    training.append([bag, output_row])

# shuffle our features and turn into np.array
# (dtype=object because the two columns can have different lengths)
random.shuffle(training)
training = np.array(training, dtype=object)

# create train and test lists (assumed split: features, then labels)
train_x = list(training[:, 0])
train_y = list(training[:, 1])
```
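The bag-of-words encoding described above can be tried on toy data without any libraries. The following is a minimal sketch, assuming a tiny hand-written vocabulary and intent list; the helper names `bag_of_words` and `one_hot` and all sample data are hypothetical and not part of the original article.

```python
def bag_of_words(pattern_words, vocabulary):
    """Return a 0/1 vector with a 1 for each vocabulary word found in the pattern."""
    present = set(pattern_words)
    return [1 if word in present else 0 for word in vocabulary]


def one_hot(tag, classes):
    """Return a 0/1 vector with a single 1 at the position of `tag`."""
    row = [0] * len(classes)
    row[classes.index(tag)] = 1
    return row


# Hypothetical toy data: a vocabulary, intent tags, and (tokens, tag) pairs.
vocabulary = ["bye", "hello", "thanks", "there"]
classes = ["goodbye", "greeting", "thanks"]
documents = [
    (["hello", "there"], "greeting"),
    (["bye"], "goodbye"),
]

# Each training example pairs a feature vector with its one-hot label.
training = [
    (bag_of_words(tokens, vocabulary), one_hot(tag, classes))
    for tokens, tag in documents
]

for features, label in training:
    print(features, label)
```

Each pattern becomes a fixed-length vector regardless of how many words it contains, which is what allows a neural network with a fixed input size to consume it. Word order is discarded, which is the main limitation of this representation.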
Perhaps you have heard this term and wondered: what is this chatbot, what is it used for, do I really need one, and how can I create one? If you just want to build your own simple chatbot, this article will take you through all the steps in creating one for yourself. It assumes some machine learning and algorithm knowledge.

A chatbot, also called an artificial chat agent, is a software program driven by machine learning algorithms that aims to simulate a human-like conversation with a user, taking input from the user as either text or speech.

Where is it used? Chatbots have extensive usage, and we cannot expound on all the possibilities where they can be of use. But basically, you'll find them in help desks, transaction processing, customer support, booking services, and 24/7 real-time chat with clients.

Do you really need one? Well, this is a matter of personal opinion: one has to do a cost-benefit analysis and decide whether it is a worthwhile project.

With the current state of technology, most companies are slowly transitioning to chatbots for their in-demand day-to-day services. Good examples that everybody uses are Google Assistant, Apple Siri, Samsung Bixby, and Amazon Alexa.

In this article, we will learn how to create one in Python, using TensorFlow to train the model and Natural Language Processing (with the NLTK library) to help the machine understand user queries.

There are two broad categories of chatbots:

- Rule-Based approach - Here the bot is trained based on some set rules. It is from these rules that the bot can process simple queries but can fail to process complex ones.
- Self-Learning approach - Here the bot uses some machine learning algorithms and techniques to chat.
    - Retrieval-Based models - In this model, the bot retrieves the best response from a list depending on the user input.
    - Generative models - This model comes up with an answer rather than searching from a given list.

In this chatbot, we will use the rule-based approach.

Some terms used in this article:

- Natural Language Processing (NLP) - This is a subfield of linguistics, computer science, information engineering, and artificial intelligence concerned with the interactions between computers and human (natural) languages.
- Lemmatization - This is the process of grouping together the different inflected forms of a word so they can be analyzed as a single item, and is a variation of stemming. For example, “feet” and “foot” are both recognized as “foot”.
- Stemming - This is the process of reducing inflected words to their word stem, base, or root form. For example, if we were to stem the words “eat”, “eating”, and “eats”, the result would be the single word “eat”.
- Tokenization - Tokens are individual words, and “tokenization” is taking a text or set of texts and breaking it up into its individual words or sentences.
- Bag of Words - This is an NLP technique of text modeling for representing text data for machine learning algorithms.
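The tokenization, stemming, and lemmatization steps described above can be illustrated without any libraries. This is a deliberately naive sketch: the regex tokenizer, the suffix-stripping `naive_stem`, and the lookup-table `naive_lemmatize` are hypothetical stand-ins written for illustration, not NLTK's algorithms, and they handle only simple cases.

```python
import re


def tokenize(text):
    """Split text into lowercase word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


# Toy rule set tried in order; this is not the Porter algorithm.
SUFFIXES = ("ing", "s")


def naive_stem(word):
    """Strip one known suffix, keeping at least three characters of stem."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


# Irregular forms need a dictionary; this table holds one illustrative entry.
LEMMAS = {"feet": "foot"}


def naive_lemmatize(word):
    """Map a word to its dictionary form if it is in the lookup table."""
    return LEMMAS.get(word, word)


tokens = tokenize("He eats, she is eating, they eat.")
print(tokens)
print([naive_stem(token) for token in tokens])
print(naive_lemmatize("feet"))
```

The contrast between the two helpers mirrors the definitions: stemming applies mechanical rules to word endings, while lemmatization relies on vocabulary knowledge, which is why “feet” can only be handled by the lookup. In a real project, NLTK's stemmers and lemmatizer would replace both toy functions.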
